As AI Goes Deeper, It Needs This Company’s Brain

Dawn Pennington | Reality Check | July 21, 2020

Have you heard of the G-MAFIA in the markets?

These six “Godfathers” are among the biggest US companies that stand to benefit from the development of artificial intelligence: Google (GOOGL), Microsoft (MSFT), Amazon (AMZN), Facebook (FB), IBM (IBM), and Apple (AAPL).

Thanks in no small part to their work to advance this versatile technology, the AI market is set to explode over the coming decade.

According to Grand View Research, the market for AI will reach $733 billion by 2027. That’s a compound annual growth rate of 42% between 2020 and 2027.

There’s plenty of room for each of these companies to get a piece of the profit pie.

But if you want to invest in the company that could become the boss in this fast-developing space, we recommend looking outside “the family.”

We’ll give you our pick in just a moment.

First, let’s look at why some of the market’s biggest innovators have their eyes on what promises to be a very big prize…

Three Phrases Every AI Investor Should Know

Autonomous cars, drones, robots, and many more of today’s cutting-edge technologies all share a dependence on machine vision.

That means these machines must be able to “see” to perform their tasks in the physical world.

Teaching a machine how to accurately interpret what it sees requires lots of training. It also requires the ability to process massive amounts of data faster than you can blink.

This process is known as machine learning, and it’s part of the AI revolution.

The more these machines “see” and “learn,” the more number-crunching power and data storage they demand.

Companies have responded to this demand by relocating a lot of this work to remote servers in data centers. This is known as cloud computing.

This industry is bursting with profit potential, too…

Get Off of My Cloud

The market for cloud computing and cloud storage is expected to top $1 trillion by 2027, according to PR Newswire.

But there’s a catch. Using offsite data centers for data processing definitely works for some uses. But it creates a critical problem for other applications: latency.

Latency is a fancy word for the time lag between sending data off to be processed and getting a response back.

Think of the frustration when your smartphone slows down when the connection is spotty. That’s latency.

It can be inconvenient, but it can also be a matter of major consequence…

Drones, self-driving cars, industrial robots, and remote medical procedures all rely on computer vision that takes in massive amounts of data, which must be processed lightning-quick, with the lowest possible latency.

You can't achieve that if the device sends data to the cloud for processing and waits for the results. It just takes too long.

It might take less than one second longer to use the cloud. But that can be the difference between an autonomous vehicle avoiding a collision and a fatal accident.
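To put that fraction of a second in perspective, here is a minimal sketch of how far a vehicle travels while it waits for an answer. The 65 mph speed and the lag values are our own illustrative assumptions, not measurements from any real system.

```python
# Hypothetical illustration: the speed and lag values below are assumptions,
# not figures reported in this article.
METERS_PER_MILE = 1609.344
SECONDS_PER_HOUR = 3600


def distance_during_lag(speed_mph: float, lag_seconds: float) -> float:
    """Return the meters a vehicle covers while waiting lag_seconds for a response."""
    meters_per_second = speed_mph * METERS_PER_MILE / SECONDS_PER_HOUR
    return meters_per_second * lag_seconds


# Compare a few assumed round-trip delays at highway speed.
for lag in (0.01, 0.1, 0.5, 1.0):
    meters = distance_during_lag(65, lag)
    print(f"{lag:.2f} s of lag at 65 mph = {meters:.1f} m traveled")
```

At 65 mph, a full second of lag works out to roughly 29 meters of road covered before the car can react.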

The solution is edge computing.

And a company at the leading edge of edge computing is Nvidia (NVDA).

Closer to the Edge

To shrink latency to the smallest possible fraction of a second, networks are being built that move AI-driven processing off the cloud… and even off the internet.

Moving the data processing and storage as close as possible to the device—or even on the device itself—will radically improve response times and reduce latency.

This new configuration where computation is done at the edge of the network—where it is closer to the end user—is called edge computing.

We’ve seen the difference described as cloud computing being about big data, while edge computing is about instant data.

In the drive for instant data, Nvidia is a major player.

Meeting the Need for Optimized Speed

Nvidia is famous for its graphics cards, built around graphics processing units (GPUs) and used in computer gaming. The company has earned this fame, dominating the market for computer graphics cards with a 68.9% market share, according to Wccftech.

But there is a lot more to GPUs than video games.

A GPU is a specialized processor, built with the ability to quickly process an astronomical amount of data. Some of Nvidia’s chips can process 10,000 trillion operations per second—an incomprehensibly large number.

How is that even possible? Let’s compare a GPU to a CPU (central processing unit, the brain of a computer).

In very basic terms, a CPU can execute instructions faster and perform a wider range of tasks. But it can do a smaller number of them simultaneously.

A GPU, on the other hand, might be slower at executing instructions, but it can perform thousands of them at the same time.

A GPU achieves this throughput because it is optimized for the highly parallel math that image processing requires, and that’s its advantage.
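The CPU-versus-GPU trade-off can be sketched with a toy model. The lane counts and per-task times below are made-up numbers chosen only to show the shape of the comparison; they do not describe any real Nvidia or Intel chip.

```python
import math


def batch_time(num_tasks: int, lanes: int, time_per_task: float) -> float:
    """Time to finish num_tasks when `lanes` tasks run side by side.

    Toy model: work proceeds in rounds, and each round takes time_per_task.
    """
    rounds = math.ceil(num_tasks / lanes)
    return rounds * time_per_task


# Hypothetical processors (assumed values, for illustration only).
CPU = {"lanes": 8, "time_per_task": 1.0}     # few lanes, fast per task
GPU = {"lanes": 4096, "time_per_task": 4.0}  # many lanes, slower per task

for n in (4, 1_000_000):
    cpu_time = batch_time(n, **CPU)
    gpu_time = batch_time(n, **GPU)
    print(f"{n} tasks: CPU {cpu_time:.0f} units, GPU {gpu_time:.0f} units")
```

In this sketch the CPU wins on a handful of tasks, but the GPU finishes a million of them far sooner. That is the kind of workload machine vision produces.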

This massive amount of computing power makes Nvidia’s GPUs ideal for AI.

How to Beat the G-MAFIA

In 2019, Nvidia launched its EGX platform, which is basically a complete AI brain available with varying computing power.

According to estimates from Tractica…

The AI chipset market will rocket from $11 billion annual sales in 2019 to $71 billion in 2025.
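For readers who want to turn those two endpoints into a yearly growth rate, here is a minimal sketch using the standard compound annual growth rate formula. The function name is our own, and the rate it produces is derived from the figures above rather than quoted from Tractica.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate implied by a start value, end value, and span."""
    return (end_value / start_value) ** (1 / years) - 1


# $11 billion in 2019 growing to $71 billion in 2025 (figures cited above).
rate = cagr(11, 71, 2025 - 2019)
print(f"Implied annual growth: {rate:.1%}")
```

That works out to growth of roughly 36% a year, compounded over six years.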

There are other microchip manufacturers developing AI products. But few can match Nvidia’s technology. Even Intel (INTC), which dominates the CPU market, has struggled to enter the GPU market.

That is why Microsoft partnered with Nvidia for its machine learning platform. The two companies also announced a collaboration for edge computing.

Nvidia has partnerships with Toyota (TM), Mercedes-Benz (DDAIF), and Volkswagen (VWAGY). Plans include using Nvidia’s technology to enable all Mercedes-Benz cars to drive autonomously and be perpetually upgraded through machine learning.

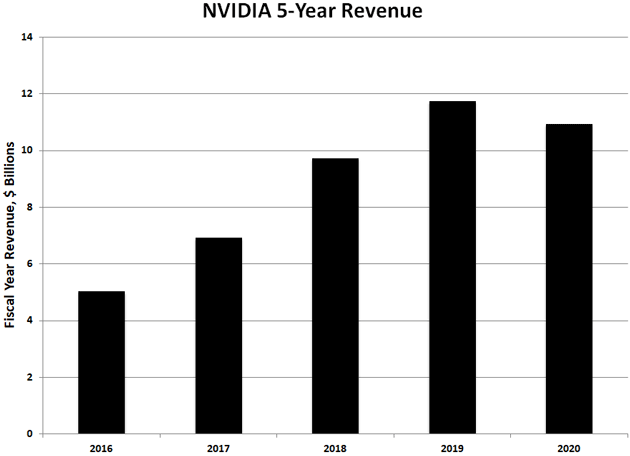

Nvidia has grown its revenue an average of 16% annually for the past five years.

Source: Macro Trends

The computer gaming segment accounts for much of that growth, and it shows no sign of slowing down.

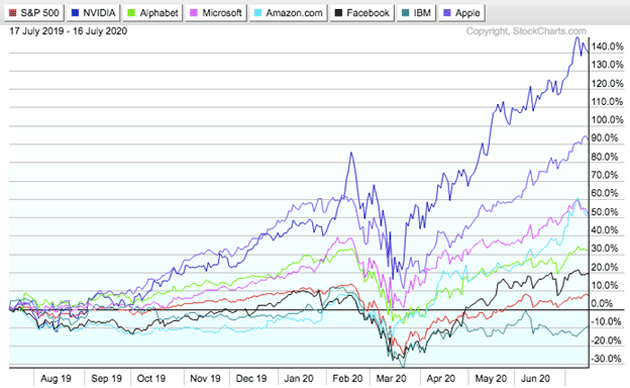

Over the past year, Nvidia stock has handily beaten the G-MAFIA stocks.

That trend doesn’t show much sign of slowing down, either.

Source: StockCharts

At just around $415 a share, NVDA stock is not cheap. But it’s a growth stock in the tech sector, where stocks often carry a premium compared to the broader market.

We suspect that investors are already pricing in the future growth of Nvidia’s GPU sales in the AI market on top of strong gaming market sales.

One thing is clear: We are entering a new era of AI. And we might be wise to take the profit opportunities and the cannoli!

Tell us: What advancements in AI are you most excited about?

Your Questions Answered

Our Reality Check readers give us a lot to smile and think about. Here are a few letters we received after last week’s discussion about a potential moonshot moment for solar…

Ted N. wrote shortly after our article published:

We actually have a meeting with a Tesla (TSLA) rep this afternoon to look at our home in Tennessee. … I have better questions to ask him after reading your article.

John P. says:

[I installed a] 10kw system 18 months ago. Works like a champ. Battery storage not especially useful because it can't start A/C. In a hurricane location, that's a problem. Solve that and you have a winner.

Andy writes:

I'm a big fan of Solar PV and I was happy to read your coverage today. Microinverters have been around for a long time; I have one system using them for the past five years. If you look on eBay, there are many brands available. For me, advancement in battery storage at reasonable cost is the next step forward. Keep up the great work.

Bill L. asks:

Any thoughts on how well Enphase has protected its moat with patents?

RC: Hi Bill, great question and thanks for sending it to us. Enphase has a large stable of patents that you can take a look at here. Some highlights:

- In 2014, Enphase expanded its portfolio to 70+ when it purchased patents from Sanyo Electric Co.

- In 2018, Enphase added another 140+ patents to its IP moat when it acquired SunPower Corp.'s microinverter business for $25 million.

- In July 2019, the company reached another milestone, having shipped 20 million of its microinverters and holding 330+ patents.

Today, Enphase has 370+ active patent families around the globe, and more are pending. The company has a history of aggressively guarding its tech innovations and competitive position via patents.

Jim H. notes:

Solar Edge offers panel-independent output and is higher-efficiency than the Enphase inverters. That said, AC microinverters are a better technology.

RC: Thanks for your note, Jim. SolarEdge does have a system that allows panels to operate in series, so if one stops working, the rest are not affected. But the panels still connect to a single inverter, so the system still has the reliability and efficiency disadvantages associated with that.

Ted, John, Andy, Bill, Jim, and everyone who wrote in this week, thank you so much for continuing the discussion. We love hearing from our readers, and look forward to the chance to connect.

What’s on your mind? Tell us here.

Dawn Pennington
